I did it again. I went against “common sense” and used dynamic memory allocation in my embedded products. Many expert embedded programmers would say “No, you should not”. However, if you implement a correct allocator, why not?

The major concerns about dynamic memory in microcontrollers are:

- The allocation/deallocation time is unpredictable.

- Memory fragmentation leads to unusable blocks.

- The memory requirement is unpredictable.

Scary? Yes, it is. But if these problems were fixed, dynamic allocation would make developers’ lives a lot easier. Before delving into how to solve them, let’s have a look at what dynamic allocation offers us:

String class

First of all, a string class that manages its own storage would be very nice, so that we could write the following:

String myDearString = "My Dear String";
String myOtherString = myDearString + " makes my life easier";
if (myOtherString.startsWith(myDearString)) ..

I’ll write up the details of my String class in another post. To be fair, I should say that myDearString is not allocated on the heap. String literals are kept in ROM, and the String class exploits that: it looks at the address of its argument and, when that address is in ROM, just keeps a pointer to it.

myOtherString is the concatenation of two strings (actually two literal strings). The class allocates enough RAM and combines the chunks, yielding “My Dear String makes my life easier”.
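To make the ROM trick concrete, here is a minimal sketch of the idea. It is not my actual String class: the names `LiteString`, `isInRom` and `kRomText` are made up for illustration. On a real microcontroller `isInRom` would compare the pointer against the flash address range from the linker script; here a static array stands in for ROM so the sketch also runs on a desktop.

```cpp
#include <cassert>
#include <cstddef>
#include <cstdint>
#include <cstdlib>
#include <cstring>

// Stand-in for flash memory (assumption: a real target would use linker symbols).
static const char kRomText[] = "My Dear String";

// Hypothetical helper: true when p points into the pretend ROM region.
static bool isInRom(const char* p) {
    const std::uintptr_t a  = reinterpret_cast<std::uintptr_t>(p);
    const std::uintptr_t lo = reinterpret_cast<std::uintptr_t>(kRomText);
    return a >= lo && a < lo + sizeof(kRomText);
}

class LiteString {
public:
    LiteString(const char* s) { assign(s); }
    LiteString(const LiteString& o) { assign(o.data_); }
    LiteString& operator=(const LiteString& o) {
        if (this != &o) { release(); assign(o.data_); }
        return *this;
    }
    ~LiteString() { release(); }

    const char* c_str() const { return data_; }
    bool ownsHeap() const { return owned_; }

    bool startsWith(const LiteString& prefix) const {
        return std::strncmp(data_, prefix.data_, std::strlen(prefix.data_)) == 0;
    }

    // Concatenation always needs RAM: allocate once, copy both chunks.
    friend LiteString operator+(const LiteString& a, const LiteString& b) {
        const std::size_t la = std::strlen(a.data_);
        const std::size_t lb = std::strlen(b.data_);
        char* buf = static_cast<char*>(std::malloc(la + lb + 1));
        if (!buf) std::abort();                  // sketch: no recovery strategy
        std::memcpy(buf, a.data_, la);
        std::memcpy(buf + la, b.data_, lb + 1);  // includes the terminator
        return LiteString(buf, true);
    }

private:
    LiteString(char* heapBuf, bool) : data_(heapBuf), owned_(true) {}

    void assign(const char* s) {
        assert(s != nullptr);
        if (isInRom(s)) {            // ROM text never changes: just point at it
            data_ = s;
            owned_ = false;
            return;
        }
        const std::size_t n = std::strlen(s) + 1;
        char* buf = static_cast<char*>(std::malloc(n));
        if (!buf) std::abort();
        std::memcpy(buf, s, n);
        data_ = buf;
        owned_ = true;
    }

    void release() {
        if (owned_) std::free(const_cast<char*>(data_));
    }

    const char* data_ = nullptr;
    bool owned_ = false;
};
```

With this sketch, `LiteString a = kRomText;` costs no heap at all, while `a + " makes my life easier"` allocates exactly one buffer for the result. The right-hand operand is copied to the heap temporarily here only because the desktop stand-in cannot tell that an ordinary literal lives in ROM.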

Message Queues

In the Awin library (a GUI library), many messages are passed to the application: key presses, key releases, mouse movements, etc. These are different types with roughly similar sizes. If I didn’t use the heap, I would have to create memory pools for them. Another layer of burden.
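As a rough illustration of what the heap buys here, the sketch below queues messages of different types through one queue, with each message allocated at its own size. The names (`Message`, `KeyMessage`, `MouseMessage`, `MessageQueue`) are hypothetical and are not Awin’s real API; it also assumes the C++ standard library is available on the target.

```cpp
#include <cassert>
#include <memory>
#include <queue>
#include <utility>

// Common base so one queue can carry every message type.
struct Message {
    enum class Type { KeyPress, KeyRelease, MouseMove };
    explicit Message(Type t) : type(t) {}
    virtual ~Message() = default;
    Type type;
};

struct KeyMessage : Message {
    KeyMessage(Type t, int code) : Message(t), keyCode(code) {}
    int keyCode;
};

struct MouseMessage : Message {
    MouseMessage(int px, int py) : Message(Type::MouseMove), x(px), y(py) {}
    int x;
    int y;
};

class MessageQueue {
public:
    // Takes ownership of a heap-allocated message.
    void post(std::unique_ptr<Message> m) {
        assert(m != nullptr);
        queue_.push(std::move(m));
    }

    // Hands the oldest message to the caller, or nullptr when empty.
    std::unique_ptr<Message> take() {
        if (queue_.empty()) return nullptr;
        std::unique_ptr<Message> m = std::move(queue_.front());
        queue_.pop();
        return m;  // freed automatically when the caller drops it
    }

    bool empty() const { return queue_.empty(); }

private:
    std::queue<std::unique_ptr<Message>> queue_;
};
```

A producer would call something like `queue.post(std::make_unique<MouseMessage>(10, 20));` and the application loop would `take()` messages and switch on `type`. No per-type pool has to be sized in advance.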

Dynamic Data

Again in the Awin library, the clipping regions are calculated on the fly. For those who have never had to care what a clipping region is, suppose the following, a button inside a window:

The window manager has two options for drawing the whole window. The first is to draw the Window area, which erases the Button area, and then draw the Button area. This causes two problems: the area under the Button is drawn unnecessarily, and there is unnecessary flicker. Unless you count the flicker as an effect, that is.

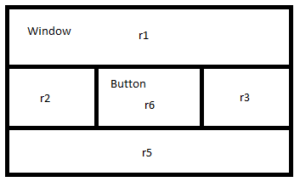

The second option is for the window manager to split the area into regions that do not intersect, as follows:

Then it calls the draw method of each class with its own regions. Because the draw methods do not erase what other methods have drawn, this approach saves CPU time (read that as more speed, less power, longer battery life) and reduces flicker to a certain extent as well.
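The splitting step can be sketched as a rectangle subtraction: cut the button’s rectangle out of the window’s rectangle and keep the non-overlapping pieces that remain. This is only an illustration under my own assumptions (half-open coordinates, a single rectangular hole, hypothetical names `Rect` and `subtract`), not the code Awin uses. The number of pieces depends on where the button sits, which is why the result goes into a dynamically sized container.

```cpp
#include <algorithm>
#include <cassert>
#include <vector>

// Half-open rectangle: covers [left, right) x [top, bottom).
struct Rect {
    int left, top, right, bottom;
    bool empty() const { return left >= right || top >= bottom; }
    int area() const { return empty() ? 0 : (right - left) * (bottom - top); }
};

// Returns up to four non-overlapping rectangles covering `outer` minus `hole`.
std::vector<Rect> subtract(const Rect& outer, const Rect& hole) {
    std::vector<Rect> out;
    if (outer.empty()) return out;

    // Only the part of the hole that lies inside `outer` matters.
    const Rect h{ std::max(outer.left, hole.left),
                  std::max(outer.top, hole.top),
                  std::min(outer.right, hole.right),
                  std::min(outer.bottom, hole.bottom) };
    if (h.empty()) {            // no overlap: the whole area stays visible
        out.push_back(outer);
        return out;
    }

    const Rect pieces[4] = {
        { outer.left, outer.top, outer.right, h.top },        // band above
        { outer.left, h.bottom,  outer.right, outer.bottom }, // band below
        { outer.left, h.top,     h.left,      h.bottom },     // left of hole
        { h.right,    h.top,     outer.right, h.bottom },     // right of hole
    };
    for (const Rect& r : pieces) {
        if (!r.empty()) out.push_back(r);  // skip pieces with zero size
    }
    return out;
}
```

For a window of `{0, 0, 100, 80}` and a button of `{20, 30, 60, 50}` this gives four pieces for the window to draw; a button in the top-left corner gives only two. The button itself is then drawn with its own rectangle as its clipping region.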

Suppose the application makes another object, let’s say a label, visible. Now the clipping region map would be different.

To support dynamic (did I say dynamic?) mapping of clipping regions without a heap, I would have had to create another memory pool for the clipping subsystem, which means managing yet another block of memory.

Zero Configuration

For years, whenever I had to use code other people wrote for embedded systems, such as Bluetooth LE, FAT, or protocol handling libraries, I had to deal with ugly macros just to get started. To turn on this feature, write this macro; to set the buffer size, define that macro; to declare how many tasks your application will use, set yet another macro.

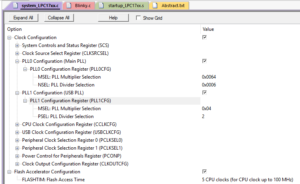

Because this creates a lot of errors, Keil made a configuration page for its macros:

I cannot say that it is bad. In the past I used it to configure my builds and turn features on and off. However, it is just a neat way to define macros, that’s it.

What if the system configured itself and gave the developer a better interface? For instance, to set a serial port’s transmit FIFO size, wouldn’t it be more readable to write:

serialPort.setTxFifoSize(1024);

Or to create a new thread:

Thread thread;
thread.start(myThreadFunction);

It creates a thread with the default stack size. You may set the stack size as well:

Thread thread;
thread.setStackSize(512);
thread.start(myThreadFunction);

I know, those last two samples are static allocations that use dynamic allocation. But neat, isn’t it?
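For the curious, here is one way a call like `setTxFifoSize` could work behind the scenes: the buffer size becomes a runtime argument instead of a macro, and the storage comes from the heap. This is a sketch under my own assumptions, not the real driver. The class layout, the `bool` return value and the `write`/`popForTransmit` helpers are invented for illustration.

```cpp
#include <cassert>
#include <cstddef>
#include <cstdint>
#include <memory>
#include <new>
#include <utility>

class SerialPort {
public:
    // Allocates a fresh transmit FIFO. On failure the old FIFO is kept.
    bool setTxFifoSize(std::size_t bytes) {
        std::unique_ptr<std::uint8_t[]> buf(new (std::nothrow) std::uint8_t[bytes]);
        if (!buf) return false;
        fifo_ = std::move(buf);       // the previous buffer is freed here
        capacity_ = bytes;
        head_ = tail_ = count_ = 0;   // pending bytes are discarded
        return true;
    }

    std::size_t txFifoSize() const { return capacity_; }

    // Queues one byte; returns false when the FIFO is full or not configured.
    bool write(std::uint8_t byte) {
        if (count_ == capacity_) return false;
        fifo_[tail_] = byte;
        tail_ = (tail_ + 1) % capacity_;
        ++count_;
        return true;
    }

    // What a transmit interrupt handler would call to fetch the next byte.
    bool popForTransmit(std::uint8_t& byte) {
        if (count_ == 0) return false;
        byte = fifo_[head_];
        head_ = (head_ + 1) % capacity_;
        --count_;
        return true;
    }

private:
    std::unique_ptr<std::uint8_t[]> fifo_;
    std::size_t capacity_ = 0;
    std::size_t head_ = 0;
    std::size_t tail_ = 0;
    std::size_t count_ = 0;
};
```

A real driver would also have to guard `write` and `popForTransmit` against each other, since one runs in thread context and the other in an interrupt; that part is left out to keep the sketch short.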